Some of these softwares are OutWit Hub, ParseHub, etc. First, the software that one installs locally on thier PC to carry out the process. Software for web scraping can be of two types. Let us discuss the methods and those services.

Also, there are various services available on the internet. There are two methods through which web scraping can be accomplished. Whereas, the pictorial or video data can be directly saved in the local hard-disk. More often, when the data carried out from any website is tabular, the best thing is to save it in an excel file in. It is obvious that copy-pasting data from several sites manually can take hours and days, but through web scraping, all the process automates thus, saving a lot of time. A question arises from here that if one can manually copy and paste data from a website, then what is the need of web scraping? This data can then be saved in a local hard-drive or in multiple database frameworks or spreadsheets. The term web scraping refers to pulling out of data from different websites. For this purpose, web scraping comes up with the second to none solution.

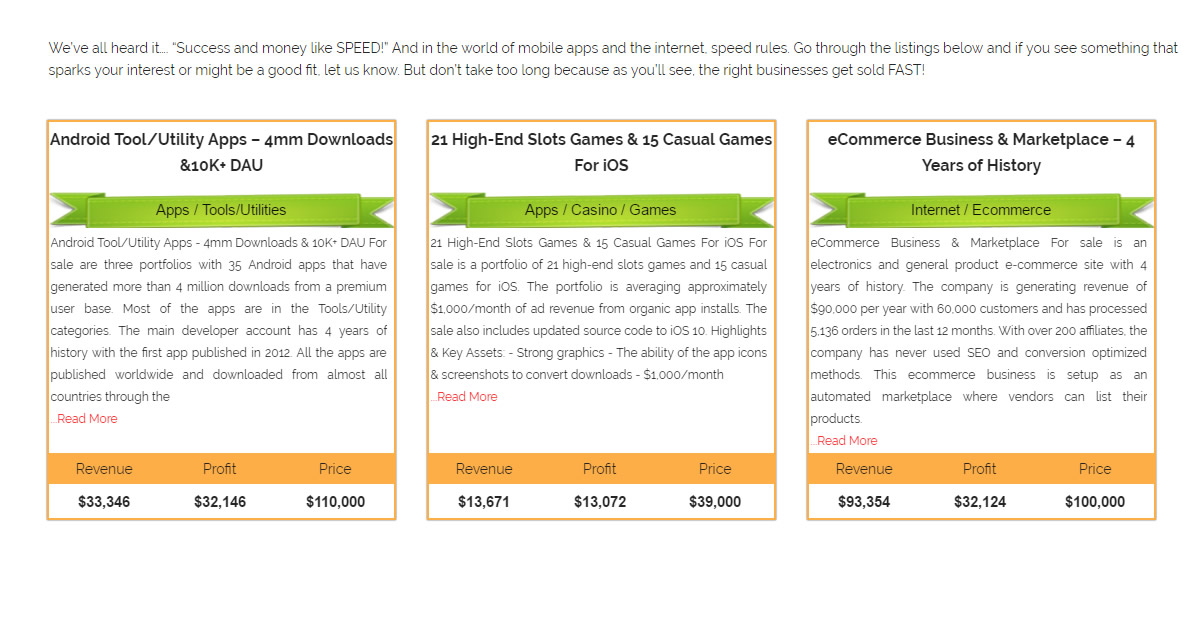

In this modern fast and steady world, the more quickly and efficiently the data is extracted, the best it would be. Extracting data from different sources has become a challenge for data engineers and scientists.
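To make the idea of saving tabular data more concrete, here is a minimal sketch in Python that pulls the rows out of an HTML table and turns them into CSV text, a format that Excel can open. It uses only the standard library. The names `TableParser`, `table_to_csv`, and `SAMPLE_HTML` are made up for this illustration, and the sample table stands in for a page that would normally be downloaded from a website. Tools such as OutWit Hub or ParseHub do this kind of work for you; this is only one simple way to approach it by hand.

```python
import csv
import io
from html.parser import HTMLParser


class TableParser(HTMLParser):
    """Collects the text of every <td>/<th> cell, grouped by <tr> row."""

    def __init__(self):
        super().__init__()
        self.rows = []      # completed rows
        self._row = None    # row currently being read, or None
        self._cell = None   # text chunks of the current cell, or None

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag in ("td", "th") and self._row is not None:
            self._cell = []

    def handle_data(self, data):
        # Only keep text that appears inside a table cell.
        if self._cell is not None:
            self._cell.append(data)

    def handle_endtag(self, tag):
        if tag in ("td", "th") and self._cell is not None:
            self._row.append("".join(self._cell).strip())
            self._cell = None
        elif tag == "tr" and self._row is not None:
            self.rows.append(self._row)
            self._row = None


def table_to_csv(html):
    """Return all table rows found in `html` as CSV text."""
    parser = TableParser()
    parser.feed(html)
    out = io.StringIO()
    csv.writer(out, lineterminator="\n").writerows(parser.rows)
    return out.getvalue()


# Hypothetical page fragment, standing in for HTML fetched from a website.
SAMPLE_HTML = """
<table>
  <tr><th>Product</th><th>Price</th></tr>
  <tr><td>Widget</td><td>9.99</td></tr>
  <tr><td>Gadget</td><td>24.50</td></tr>
</table>
"""

if __name__ == "__main__":
    print(table_to_csv(SAMPLE_HTML), end="")
```

Writing the returned text to a file ending in `.csv` gives a file that can be opened in a spreadsheet program. Real pages are often messier than this sample (nested tables, merged cells), so a dedicated scraping tool or library is usually a better fit for larger jobs.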
